-

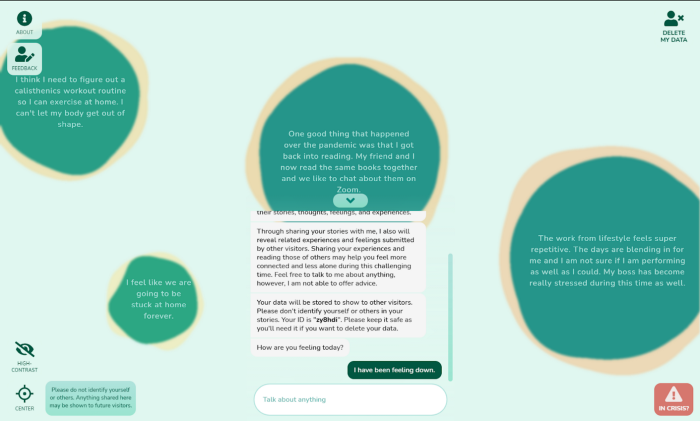

Covid Connect: Chat-Driven Anonymous Story-Sharing for Peer Support

- Card-IT Language Learning

A flashcard application for training and testing Italian verb morphology. It uses a finite-state morphological (FSM) analyzer that both analyzes a user’s input and dynamically generates specific verb forms as flashcards.

-

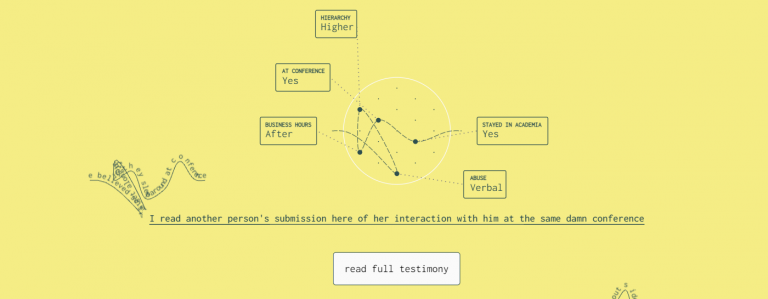

Academia is Tied in Knots

A data visualization project aimed at giving visibility to the issue of sexual harassment in the academic community.

- Tilt-Responsive Techniques for Digital Drawing Boards

An investigation into a range of app-specific transitions on tilt-adjustable drawing boards in response to changes in display angle such as reading vs. writing (annotation), public vs. personal, and shared person-space vs. task-space, among others.

- Textension: Digitally Augmenting Document Spaces in Analog Texts

A framework that allows people who work with analog texts to leverage the affordances of digital technology, such as data visualization, computational linguistics, and search, using any web-based mobile device with a camera.

- Eye Tracking for Target Acquisition in Sparse Visualizations

A novel marker-free method for identifying screens of interest when using head-mounted eye-tracking for visualization in cluttered and multi-screen environments.

Vialab is led by Dr. Christopher Collins at Ontario Tech University. This site serves as a home for our research and news about the lab. Check out our GitHub for software projects related to our research, and follow our Medium account to keep up with our blog. Details about our various research projects can be found on our Research page.

Research Blog

- Professional Differences: How Disciplinary Training Affects Visualization Task Performance

Empowering people across all disciplines and professions to understand their data is the enduring promise of visualization. When designing a visualization for a specific industry or discipline, visualization designers will consider the nature of the data and how it is used by that specific disciplin ...

- Parallel Tag Clouds: A Look Back at One of Our Most Influential Papers

In this blog post, we take a look back at an older paper of ours titled Parallel Tag Clouds to Explore and Analyze Faceted Text Corpora, and explore how it has influenced data visualization research since it was published. Parallel Tag Clouds was presented at the IEEE Conference on Visual Analytics ...

-

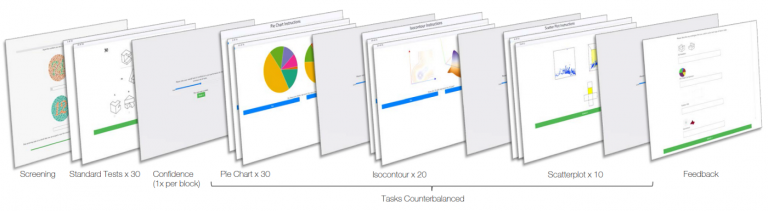

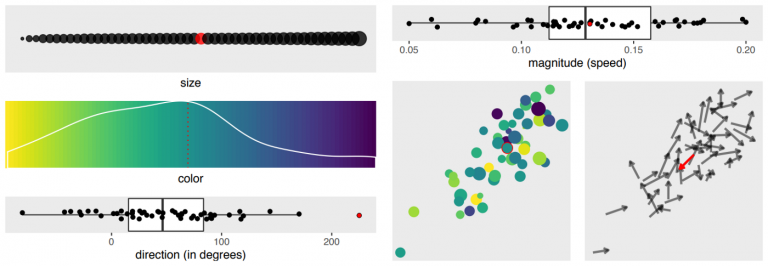

Effects of Multiple Visual Channels on Outlier Detection: Why animated scatterplots are not well suited for data discovery

Data visualization is the practice of mapping data variables to visual variables, or visual dimensions. Despite only having two spatial dimensions to work with on a normal screen, data scientists often seek to cram as many data variables as possible into a single visualization using other visual dim ...

-

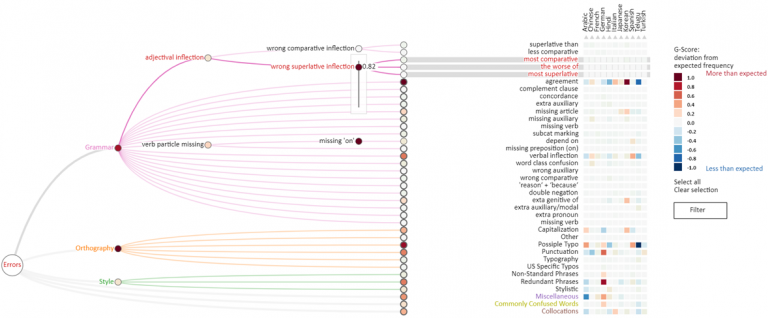

Visualizing Language Transfer Effects in Large Learner Corpora

Second Language Acquisition (SLA) is a research field that studies the process of learning another language. Language transfer effects occur when the learner applies structural, grammatical, or semantic rules from their native language to the language they are trying to learn. For example, a native ...

- Covid Connect: Enabling anonymous peer-based mental health support with artificial intelligence and visualization

It’s been well over a year since the COVID-19 pandemic spread across the globe, prompting various measures from governments and private-sector entities alike. One of the first responses to the pandemic came in the form of widespread implementation of work-from-home and learn-from-home policies. Gov ...

- Visualizing sexual harassment in academia: How researchers conveyed the bigger picture behind the data without compromising the individual significance of the stories.

Sexual harassment in academia is an unusually overlooked component of the greater societal conversation around sexual assault and harassment. This is especially puzzling when you consider the intense power dynamics that exist in academic institutions, as well as the non-academic aspects of universit ...

For more posts from our research blog, check out our Medium page.

News & Updates

- Vialab members to have largest ever presence at the IEEE VIS Conference

- Vialab members presenting award-winning work at the ACM CHI Conference on Human Factors in Computing Systems

- Vialab member Menna El-Assady to present ‘ThreadReconstructor: Modeling Reply-Chains to Untangle Conversational Text through Visual Analytics’ at EuroVis 2018 in Brno.

- 2016 Course Materials

- Vialab-hosted talk: Dr. Nathalie Henry Riche, Microsoft Research

- Vialab contributions to IEEE VIS 2017